SCABOR

Stereo Camera Based Object Recognition System for Vehicle Applications

Project funded by Volkswagen AG, Germany (2001-2004)

Introduction

Automotive companies are investing heavily in intelligent systems that can assist the driving process. Applications based on radar and laser sensors are suited to precise position measurements, but by their nature they cannot give a complete description of the driving environment. Vision sensors can supply the information that those measurements lack. Stereovision has proved to be the only method able to give reliable results for both measurement and description of the driving environment.

Objectives

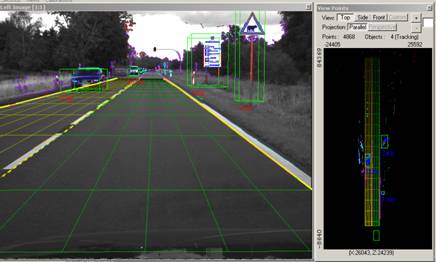

Development of a real-time, stereo-camera-based system able to give a complete description of the driving environment. The system is optimized for highways but also works well on country roads and in urban scenarios.

Operating conditions

• detection range: 100 m
• maximum speed: 180 km/h
• processing speed: 10 fps

Main features

• stereo approach
• 3D lane detection on flat and non-flat roads, with or without lane markings
• object detection and tracking (in terms of position, size and speed)
• detection of side lanes and the driving area
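As a rough illustration of what these figures imply, the sketch below works out how far the vehicle travels between two processed frames and how long it takes to cover the full detection range at maximum speed. It uses only the three values listed above; the variable names are illustrative and not part of the project.

```python
# Back-of-the-envelope timing from the stated detection range, speed and frame rate.
DETECTION_RANGE_M = 100.0   # stated detection range
MAX_SPEED_KMH = 180.0       # stated maximum speed
FRAME_RATE_FPS = 10.0       # stated processing speed

# Convert km/h to m/s.
speed_ms = MAX_SPEED_KMH * 1000.0 / 3600.0

# Distance covered between two consecutive processed frames.
travel_per_frame_m = speed_ms / FRAME_RATE_FPS

# Time needed to drive the whole detection range at maximum speed,
# i.e. the time budget for a stationary obstacle first seen at 100 m.
time_to_cover_range_s = DETECTION_RANGE_M / speed_ms

# Number of frames processed during that time.
frames_within_range = time_to_cover_range_s * FRAME_RATE_FPS

print(f"speed: {speed_ms:.1f} m/s")
print(f"travel per frame: {travel_per_frame_m:.1f} m")
print(f"time to cover range: {time_to_cover_range_s:.1f} s")
print(f"frames within range: {frames_within_range:.0f}")
```

At maximum speed the system therefore has about two seconds, or roughly twenty frames, between first detecting a stationary obstacle at the edge of its range and reaching it.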

Methodology

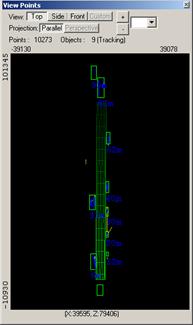

The acquisition system consists of two digital black-and-white cameras mounted on a stereo rig. The camera and stereo-rig parameters are calibrated using a dedicated methodology optimized for the requirements of far-range stereovision. Stereo reconstruction is performed on edge features. Features belonging to lane delimiters are classified, and their 3D coordinates are used in the lane detection process. The 3D points above the detected road surface are grouped into objects, taking into account vicinity criteria, the variation of point density with distance, and 2D image information such as similar texture and connecting edges. Kalman filtering is used to track both lane and object parameters.
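The page does not give the reconstruction equations. For a rectified pinhole stereo pair, the standard relation between depth and disparity is Z = f·B/d, and a disparity error δd maps to a depth error of approximately Z²/(f·B)·δd. This quadratic growth with distance is the usual reason far-range stereo depends so heavily on accurate calibration. The following is a minimal sketch of that relation, assuming hypothetical values for focal length, baseline and matching accuracy that are not taken from the SCABOR system.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair (standard pinhole model)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error(focal_px: float, baseline_m: float, depth_m: float,
                disparity_error_px: float) -> float:
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


# Hypothetical rig parameters, chosen only for illustration.
FOCAL_PX = 1000.0        # assumed focal length in pixels
BASELINE_M = 0.5         # assumed distance between the two cameras
DISPARITY_ERR_PX = 0.25  # assumed sub-pixel matching accuracy

for disparity in (50.0, 10.0, 5.0):
    z = depth_from_disparity(FOCAL_PX, BASELINE_M, disparity)
    dz = depth_error(FOCAL_PX, BASELINE_M, z, DISPARITY_ERR_PX)
    print(f"disparity {disparity:5.1f} px -> depth {z:6.1f} m, uncertainty {dz:5.2f} m")
```

With these assumed parameters, a point at the 100 m limit has a disparity of only a few pixels, so small matching or calibration errors translate into metres of depth error.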

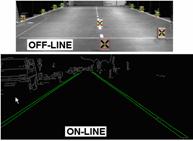
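The methodology mentions Kalman filtering for lane and object parameters but does not specify the state model. As an illustration only, the sketch below tracks the longitudinal distance and relative speed of a single object with a constant-velocity model, which is an assumption and not necessarily the model used in the project. The class name, noise values and simulated measurements are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Track:
    """Distance (m) and relative speed (m/s) with a 2x2 covariance matrix."""
    pos: float
    vel: float
    p00: float = 100.0
    p01: float = 0.0
    p10: float = 0.0
    p11: float = 100.0


def predict(t: Track, dt: float, q_pos: float, q_vel: float) -> None:
    """Constant-velocity prediction: x' = F x, P' = F P F^T + Q."""
    t.pos += dt * t.vel
    a = t.p00 + dt * t.p10
    b = t.p01 + dt * t.p11
    t.p00 = a + dt * b + q_pos
    t.p01 = b
    t.p10 = t.p10 + dt * t.p11
    t.p11 = t.p11 + q_vel


def update(t: Track, z: float, r: float) -> None:
    """Correct the state with a distance measurement z of variance r."""
    innovation = z - t.pos
    s = t.p00 + r                      # innovation variance
    k0, k1 = t.p00 / s, t.p10 / s      # Kalman gain for pos and vel
    t.pos += k0 * innovation
    t.vel += k1 * innovation
    p00, p01 = t.p00, t.p01
    t.p00 = (1.0 - k0) * p00           # P = (I - K H) P'
    t.p01 = (1.0 - k0) * p01
    t.p10 = t.p10 - k1 * p00
    t.p11 = t.p11 - k1 * p01


DT = 0.1  # frame period at 10 fps
track = Track(pos=80.0, vel=0.0)       # initial speed unknown, set to zero
true_pos, true_vel = 80.0, -10.0       # simulated object closing at 10 m/s

for _ in range(50):
    true_pos += true_vel * DT
    predict(track, DT, q_pos=0.01, q_vel=0.01)
    update(track, true_pos, r=0.25)    # noise-free measurement for clarity

print(f"estimated distance {track.pos:.2f} m, estimated speed {track.vel:.2f} m/s")
```

Only the distance is measured here; the relative speed is recovered by the filter from successive frames, which is how a tracker of this kind can report object speed from position measurements alone.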

Achievements

Camera calibration

• Robust off-line camera calibration techniques and methodologies for far-range stereo were developed: off-line methods using calibration objects with known structure (fig. 1, top).

Stereo-image acquisition system

High resolution and far distance stereovision

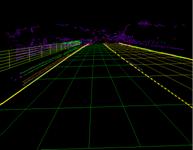

3D lane detection

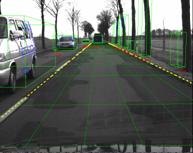

Object detection and tracking

Concluding remarks

We have developed a stereovision-based system able to perform in real time many of the tasks needed for describing and measuring the driving environment. The system functions are integrated into a dedicated stereovision framework, which can easily be extended with other capabilities (e.g. image analysis tasks) or used to build a specific active safety or driving assistance application for the automotive industry.

Our services

The research team's expertise has reached a state-of-the-art level in several fields of computer vision applicable to robotics and automotive applications: camera calibration, high-resolution stereo reconstruction, stereo measurements, object detection and tracking, 3D lane detection, and stereo image acquisition systems (control, programming, design).

Recent publications

• S. Nedevschi, F. Oniga, R. Danescu, T. Graf, R. Schmidt, "Increased Accuracy Stereo Approach for 3D Lane Detection", Proceedings of IEEE Intelligent Vehicles Symposium (IV2006), June 13-15, 2006, Tokyo, Japan, pp. 42-49.

• T. Marita, F. Oniga, S. Nedevschi, T. Graf, R. Schmidt, "Camera Calibration Method for Far Range Stereovision Sensors Used in Vehicles", Proceedings of IEEE Intelligent Vehicles Symposium (IV2006), June 13-15, 2006, Tokyo, Japan, pp. 356-363.

• S. Nedevschi, R. Danescu, T. Marita, F. Oniga, C. Pocol, S. Sobol, T. Graf, R. Schmidt, "Driving Environment Perception Using Stereovision", Proceedings of IEEE Intelligent Vehicles Symposium (IV2005), June 2005, Las Vegas, USA, pp. 331-336.

• S. Nedevschi, R. Schmidt, T. Graf, R. Danescu, D. Frentiu, T. Marita, F. Oniga, C. Pocol, "3D Lane Detection System Based on Stereovision", IEEE Intelligent Transportation Systems Conference (ITSC), 2004, Washington, USA, pp. 161-166.

• S. Nedevschi, R. Danescu, D. Frentiu, T. Marita, F. Oniga, C. Pocol, T. Graf, R. Schmidt, "High Accuracy Stereovision Approach for Obstacle Detection on Non-Planar Roads", IEEE Intelligent Engineering Systems (INES), 2004, Cluj-Napoca, Romania, pp. 211-216.

• S. Nedevschi, R. Danescu, D. Frentiu, T. Marita, F. Oniga, C. Pocol, R. Schmidt, T. Graf, "High Accuracy Stereo Vision System for Far Distance Obstacle Detection", IEEE Intelligent Vehicles Symposium (IV2004), 2004, Parma, Italy, pp. 292-297.